Artificial Intelligence

I like the dreams of the future better than the history of the past.

A perfect storm of change, fuelled by digitization, mobilization and automation, is turning science fiction into science fact and forcing enterprises to transform digitally.

In today’s world, strategies can no longer be based on descriptive analytics alone. Businesses need to push the limits of their traditional BI and analytics frameworks with predictive and prescriptive capabilities to reach business outcomes faster and more accurately.

AI – Artificial Intelligence

Artificial intelligence (AI) is an umbrella term covering all the methods, algorithms and technologies that make devices and software act intelligently. AI was a dream that appeared in 1956 but did not materialize until the explosion of Big Data and advanced data technologies, combined with ever more powerful and less expensive computing and storage.

AI is now seeping into our lives, affecting how we live, work and entertain ourselves. Many applications of artificial intelligence are already in use, from voice-powered personal assistants such as Siri and Alexa to more fundamental technologies such as search suggestions and self-driving vehicles.

But where does all the intelligence come from?

Machine Learning

The realization that computers could be programmed to learn for themselves, together with the explosion of available training data, accelerated the evolution of Machine Learning, a subset of AI sometimes referred to as “Narrow AI”.

Machine learning refers to algorithms and methodologies that improve their performance as they are trained on larger amounts of data. Commonly used machine-learning techniques include neural networks, decision trees, Bayesian networks, k-nearest neighbors, regression techniques and many more.
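To make the idea of “learning from training data” concrete, here is a minimal k-nearest neighbors classifier, one of the techniques listed above, written in plain Python. It is a sketch under simple assumptions (Euclidean distance, majority vote, a tiny hypothetical churn dataset), not a production implementation: the model simply stores labelled examples, so adding more data directly changes its predictions.

```python
import math
from collections import Counter


def knn_predict(training_data, query, k=3):
    """Classify `query` by majority vote among its k nearest training examples.

    training_data: list of (feature_vector, label) pairs
    query: feature vector (tuple of floats) of the same length
    """
    # Sort training examples by Euclidean distance to the query point.
    by_distance = sorted(training_data, key=lambda example: math.dist(example[0], query))
    # Take the labels of the k closest examples and return the most common one.
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical toy data: (hours of usage per week, support tickets) -> label.
training = [
    ((1.0, 5.0), "churn"),
    ((2.0, 4.0), "churn"),
    ((1.5, 6.0), "churn"),
    ((8.0, 0.0), "stay"),
    ((9.0, 1.0), "stay"),
    ((7.5, 0.5), "stay"),
]

print(knn_predict(training, (1.2, 5.5)))  # → churn
print(knn_predict(training, (8.5, 0.2)))  # → stay
```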

From "what is happening?" or "what has happened?"

To "what will happen next?"

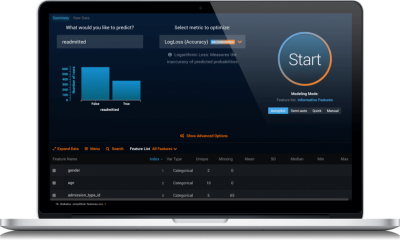

DataRobot

The world’s most advanced enterprise automated machine learning platform.

DataRobot captures the knowledge, experience, and best practices of the world’s leading data scientists, delivering unmatched levels of automation and ease-of-use for machine learning initiatives. DataRobot enables users to build and deploy highly accurate machine learning models in a fraction of the time it takes using traditional data science methods.

Accuracy

While automation and speed usually come at the expense of quality, DataRobot uniquely delivers on all three fronts. The DataRobot platform automatically searches through millions of combinations of algorithms, data preprocessing steps, transformations, features, and tuning parameters to find the best machine learning model for your data. Each model is unique, fine-tuned for the specific dataset and prediction target.
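The search described above can be illustrated at toy scale: fit every candidate algorithm and tuning parameter on training data, score each on held-out validation data, and keep the winner. This is a minimal exhaustive-search sketch with hypothetical candidates, data and metric (mean absolute error); it is not DataRobot’s actual search strategy, which the text says spans millions of combinations.

```python
import statistics


def fit_mean(train):
    """Baseline: always predict the average target seen in training."""
    mean_y = statistics.fmean(y for _, y in train)
    return lambda x: mean_y


def fit_linear(train):
    """Ordinary least-squares line y = slope * x + intercept."""
    xs = [x for x, _ in train]
    ys = [y for _, y in train]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    slope = sum((x - mx) * (y - my) for x, y in train) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    return lambda x: slope * x + intercept


def make_knn(k):
    """k-nearest-neighbors regression; k is the tuning parameter."""
    def fit_knn(train):
        def predict(x):
            nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
            return statistics.fmean(y for _, y in nearest)
        return predict
    return fit_knn


def mean_absolute_error(model, data):
    return statistics.fmean(abs(model(x) - y) for x, y in data)


def search_best_model(candidates, train, valid):
    """Fit every candidate on `train`, score it on `valid`, return (best_name, scores)."""
    scores = {}
    for name, fit in candidates.items():
        model = fit(train)
        scores[name] = mean_absolute_error(model, valid)
    best_name = min(scores, key=scores.get)
    return best_name, scores


# Hypothetical candidate grid: algorithm x tuning parameter.
candidates = {
    "mean": fit_mean,
    "linear": fit_linear,
    "knn_k1": make_knn(1),
    "knn_k3": make_knn(3),
}

# Hypothetical data following y = 2x + 1; even x for training, odd x for validation.
data = [(x, 2 * x + 1) for x in range(10)]
train, valid = data[::2], data[1::2]

best, scores = search_best_model(candidates, train, valid)
print(best)  # → linear
```

Real automated platforms add smarter search (pruning, early stopping, cross-validation) on top of this basic fit-score-select loop.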

Speed

DataRobot features a massively parallel modeling engine that can scale to hundreds or even thousands of powerful servers to explore, build and tune machine learning models. Large datasets? Wide datasets? No problem. The speed and scalability of modeling are limited only by the computational resources at DataRobot’s disposal. With all this power, work that used to take months can now be finished in hours.

Ease-of-Use

The intuitive web-based interface allows anyone to work with a very powerful platform, regardless of skill level or machine learning experience. Users can drag and drop their data and let DataRobot do the work, or they can write their own models for the platform to evaluate. Built-in visualizations, such as Feature Effect, Feature Fit, and Feature Impact, offer deep insights and a whole new understanding of your business.

Ecosystem

Keeping up with the growing ecosystem of machine learning algorithms has never been this easy. DataRobot is constantly expanding its vast set of diverse, best-in-class algorithms from R, Python, H2O, Spark, and other sources, giving users the best set of tools for machine learning and AI challenges. With a simple click of the Start button, users can deploy techniques they have never used before or may not even be familiar with.

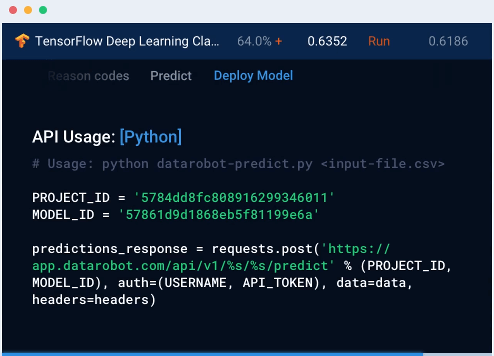

Industrialization

Whether you’re building a modelling factory or a highly scalable real-time application, DataRobot allows true industrialization of machine learning. With the DataRobot API, you can create, explore and refresh hundreds or even thousands of models with just a few lines of script. Ready to deploy? The Prediction API makes it easy to operationalize models in Syncrasy Data Flow pipelines with just a few lines of code. No need for scoring code. Ever.

Rapid Deployment

The best machine learning models have little to no organizational value unless they are rapidly operationalized within the business. With DataRobot’s automated machine learning platform, models can be deployed with a few mouse clicks. Not only that, every model built by DataRobot publishes a REST API endpoint, making it a breeze to integrate into modern enterprise applications. Organizations can now derive business value from machine learning in minutes, instead of waiting months to write scoring code and deal with the underlying infrastructure.
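From the calling application’s side, integrating with a model’s REST endpoint typically means sending records as JSON over HTTP. The sketch below only builds such a request with Python’s standard library; the endpoint URL, bearer-token header and field names are hypothetical placeholders, not DataRobot’s documented Prediction API, whose actual URL scheme, authentication and payload format should be taken from its own documentation.

```python
import json
import urllib.request


def build_prediction_request(endpoint_url, api_token, rows):
    """Build (but do not send) a JSON POST request to a model scoring endpoint.

    endpoint_url, the Bearer-token header and the row fields are hypothetical;
    a real deployment documents its own URL, authentication and payload format.
    """
    body = json.dumps(rows).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_token}",
        },
    )


request = build_prediction_request(
    "https://models.example.com/predict",  # hypothetical endpoint
    "YOUR_API_TOKEN",                      # hypothetical credential
    [{"tenure_months": 12, "monthly_spend": 49.5}],
)
# Sending it would be urllib.request.urlopen(request); the response format
# depends entirely on the service being called.
print(request.get_method(), request.full_url)  # → POST https://models.example.com/predict
```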

The tip of the iceberg

Machine Learning and Artificial Intelligence technologies are only “the tip of the data processing iceberg”.

The deep substance is what is below the water line.

Before Machine Learning and Artificial Intelligence technologies can usefully be deployed, enterprises need to access, curate and store all of their internal and external data sources, including previously disregarded data.

Unfortunately, in most large enterprises, meaningful raw data tends to reside in line-of-business systems spread across multiple business units, often with overlapping and conflicting information. In addition, legacy databases, ETL and storage systems are often technologically ill-suited to Machine Learning and Artificial Intelligence, because of the explosion in data types and volumes and the commercial need to act on real-time information.
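Overlapping and conflicting records have to be reconciled before they are useful for training. One common, simple policy is “last write wins” per field. The sketch below assumes each record carries a shared customer identifier and an update date; the field names and sample systems are hypothetical, and real master-data pipelines usually need richer matching and survivorship rules.

```python
from datetime import date


def reconcile(records):
    """Merge records that share a customer_id, keeping the newest value per field.

    Each record is a dict with 'customer_id', 'updated' (a date) and data fields.
    None values are treated as missing and never overwrite a known value.
    """
    merged = {}
    # Process oldest first so that newer values overwrite older ones.
    for record in sorted(records, key=lambda r: r["updated"]):
        target = merged.setdefault(record["customer_id"], {})
        for field, value in record.items():
            if value is not None:
                target[field] = value
    return merged


# Hypothetical extracts from two line-of-business systems.
crm = [{"customer_id": 42, "updated": date(2019, 3, 1),
        "email": "a@old.example", "phone": "555-0100"}]
billing = [{"customer_id": 42, "updated": date(2020, 1, 15),
            "email": "a@new.example", "phone": None}]

print(reconcile(crm + billing)[42])
```

Here the newer billing record supplies the email address, while the phone number survives from the CRM because billing has no value for it.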

Before enterprises can consider deploying Machine Learning and Artificial Intelligence, they must first establish “the deep substance”: the underlying databases and applications capable of delivering data to Machine Learning and Artificial Intelligence processes, together with the computing and storage architectures to support them.

Underpin your data science initiatives with Syncrasy’s powerful, enterprise-ready platform.

Syncrasy’s Platform Core Technology

Fault-tolerant, distributed and extensible by design, Syncrasy’s Platform Core efficiently manages elastically scalable, COTS-based computing resources, automates resource balancing to meet application requirements, and supports full redundancy and high availability for all deployed databases and applications.
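Automated resource balancing can take many forms. As an illustration only, the sketch below uses a classic greedy heuristic: place the largest workloads first, each on the node with the most free capacity. Node names, capacities and demands are hypothetical, and this is not a description of how Syncrasy’s Platform Core actually schedules resources.

```python
import heapq


def place_applications(app_demands, node_capacities):
    """Greedily assign each application to the node with the most free capacity.

    app_demands: dict app_name -> required units (e.g. CPU cores)
    node_capacities: dict node_name -> available units
    Returns dict app_name -> node_name; raises ValueError if an app cannot fit.
    """
    # heapq is a min-heap, so store negative free capacity to pop the emptiest node first.
    heap = [(-capacity, node) for node, capacity in node_capacities.items()]
    heapq.heapify(heap)
    placement = {}
    # Place the largest applications first; this usually packs more evenly.
    for app, demand in sorted(app_demands.items(), key=lambda item: -item[1]):
        neg_free, node = heapq.heappop(heap)
        free = -neg_free
        if demand > free:
            # The emptiest node cannot fit it, so no node can.
            raise ValueError(f"no node has {demand} free units for {app!r}")
        placement[app] = node
        heapq.heappush(heap, (-(free - demand), node))
    return placement


# Hypothetical cluster and workloads.
nodes = {"node-a": 8, "node-b": 8}
apps = {"db": 6, "etl": 4, "api": 2, "ui": 1}

placement = place_applications(apps, nodes)
print(placement)  # → {'db': 'node-a', 'etl': 'node-b', 'api': 'node-b', 'ui': 'node-a'}
```

Sorting largest-first before greedy placement is a standard way to reduce imbalance; finding an exact optimum is a bin-packing problem, which is NP-hard in general.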

Syncrasy’s Software Defined Storage

Syncrasy’s COTS-based Software Defined Storage solutions provide low-cost, highly scalable options for storing traditional and new data types and volumes with no vendor lock-in, whilst also allowing existing legacy storage infrastructure to be reused.

Syncrasy’s Run Anywhere Technology

Fully optimized for bare-metal, virtualized, cloud or hybrid infrastructures, Syncrasy’s Run Anywhere Technology lays the foundation needed to launch and migrate machine learning and AI initiatives flexibly, with minimum investment and maximum scalability.

Syncrasy’s Chameleon Technology

Syncrasy’s Chameleon Technology makes it possible to plug in the modern, data-centric applications needed to manage all data types and volumes: from connection, through transformation, to delivery into data stores, data analytics and machine learning processes, and data visualization tools, potentially triggering the automated actions needed to optimize enterprise performance.
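The connection, transformation and delivery flow described above can be sketched as a pipeline whose stages are looked up by name in registries, so one stage can be swapped without touching the others. The registry design, stage names and inline CSV source are hypothetical, chosen to show the general plug-in pattern rather than Syncrasy’s Chameleon Technology itself.

```python
import csv
import io
from typing import Callable, Dict, Iterable, List

# Registries of interchangeable stages, keyed by a name chosen at configuration time.
SOURCES: Dict[str, Callable[[], Iterable[dict]]] = {}
TRANSFORMS: Dict[str, Callable[[dict], dict]] = {}
SINKS: Dict[str, Callable[[List[dict]], None]] = {}


def register(registry, name):
    """Decorator that adds a function to one of the stage registries."""
    def decorator(func):
        registry[name] = func
        return func
    return decorator


@register(SOURCES, "inline_csv")
def read_inline_csv():
    # Hypothetical connector: parses a small CSV string instead of a real system.
    return list(csv.DictReader(io.StringIO("sensor,reading\nA,20.5\nB,21.0\n")))


@register(TRANSFORMS, "parse_reading")
def parse_reading(row):
    # Convert the text reading into a number.
    return {**row, "reading": float(row["reading"])}


collected: List[dict] = []


@register(SINKS, "memory")
def write_to_memory(rows):
    # Hypothetical sink: stores rows in a list instead of a data store.
    collected.extend(rows)


def run_pipeline(source, transforms, sink):
    """Connect -> transform -> deliver, with each stage looked up by name."""
    rows = list(SOURCES[source]())
    for name in transforms:
        rows = [TRANSFORMS[name](row) for row in rows]
    SINKS[sink](rows)


run_pipeline("inline_csv", ["parse_reading"], "memory")
print(collected)  # → [{'sensor': 'A', 'reading': 20.5}, {'sensor': 'B', 'reading': 21.0}]
```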

Syncrasy’s Virtualization Technology

A lightweight, low-cost alternative to full machine virtualization, Syncrasy’s Virtualization Technology delivers high availability, live migration and automatic backup and restore for both modern cluster-based applications and existing line-of-business applications.

Syncrasy’s Pluggable Applications

New data-centric applications are appearing every day and no single technology is fit for every purpose, which is why Syncrasy incorporates pluggable choices from today’s best-of-breed applications across the whole data processing spectrum and is committed to adding new technologies as they advance.